I wrote the last deep-dive on edge computing for Earth observation (EO) in September 2023. At the time, much of what I covered was potential: technology demonstrations, early hardware, and a thesis about where things were heading. Two and a half years later, the landscape has shifted enough to warrant a second edition.

This is not a rehash. The fundamentals of edge computing have not changed, but the commercial reality around it has. What was experimental then is close to becoming fully operational now. What was a niche capability is becoming an architectural choice that satellite operators, their investors, and their customers will need to make or accept, if they have not already.

This piece gives an overview of the edge computing process, then covers its use cases, the commercial landscape, the competing architectural paths (edge processing vs. optical downlinks vs. a hybrid of both), where adoption actually stands beyond the press releases, and where this is all heading.

Note: Throughout the piece, I use the terms edge computing, edge AI, onboard processing, or simply edge interchangeably.

What has changed since 2023

In 2023, edge computing for EO was largely defined by a handful of institutional demonstration missions (like PhiSat) and a growing number of startups with hardware on the roadmap but not yet in orbit. PhiSat had shown that onboard AI could detect clouds. A few hardware suppliers were emerging. The conversation was about possibility. Two and a half years later, the landscape has shifted across several dimensions at once.

Operators are designing edge in from the start. Planet's next-generation Owl constellation integrates NVIDIA GPUs onboard for AI inference, designed to work in a tip-and-cue configuration with their Pelican fleet. Satellogic's "AI-First" architecture is built around continuous capture and onboard GPU processing, with multiple models running simultaneously and updateable after launch. These are not retrofits. Edge is becoming a first-order architectural decision.

The hardware ecosystem has matured. Ubotica has flown 11 missions with over 30 AI models in orbit, including live vessel detection with real-time relay to ground via inter-satellite links. Unibap is deploying SpaceCloud compute units across multiple constellations. KP Labs flew deep learning on its Intuition-1 hyperspectral satellite. New entrants like EDGX and Aethero are closing deals and shipping hardware – the supply side is no longer the bottleneck.

Foundation models are going to orbit. IBM's TerraMind.tiny, a geospatial foundation model, is now running on Planetek Italia's AI-eXpress constellation, marking an early test of whether general-purpose AI models can replace task-specific algorithms onboard satellites. This matters because it could change how often you need to update onboard software, and what kinds of tasks a single satellite can perform.

Use cases are moving from demos to contracts. Edge computing is being deployed as shared infrastructure. Loft Orbital's satellites carry multi-node compute environments where multiple customers run AI models in orbit — SkyServe's STORM platform has processed NASA JPL's wildfire and flood detection algorithms directly onboard. Defence and intelligence agencies are buying edge-enabled tasking for maritime surveillance. The question is shifting from "does it work" to "who pays, and for what."

Orbital data centres are becoming real. Starcloud launched an NVIDIA H100 GPU to orbit in November 2025 (billed as the most powerful processor ever flown in space) and is already running inference on Capella Space SAR imagery. Axiom Space deployed its first orbital data centre nodes in January 2026. Google and Planet announced Suncatcher, a joint programme to fly Google TPUs on Planet's satellite bus. Processing is no longer confined to the satellite that captured the data.

China is building a parallel ecosystem. The first twelve satellites of the "Three-Body Computing Constellation" launched in May 2025 and have since demonstrated distributed computing, inter-satellite networking, and deployment of 8-billion-parameter AI models in orbit, among the largest currently operating in space. STAR.VISION's STRING AI computing unit is processing EO data onboard across multiple missions with 100 Gbps laser inter-satellite links. China is making moves here, as it has in every other domain.

A new architectural debate has emerged. As optical inter-satellite links and ground networks improve, a competing thesis is gaining traction: rather than processing data onboard, beam everything down at high speed and process on the ground. The answer will likely be "both, depending on the use case" — but the tension between these approaches shapes investment decisions, system design, and who captures value in the EO chain.

Edge computing for EO is no longer a technology demonstration. It is becoming part of the invisible infrastructure of EO, and the choices being made now will define how the industry operates for the next decade.

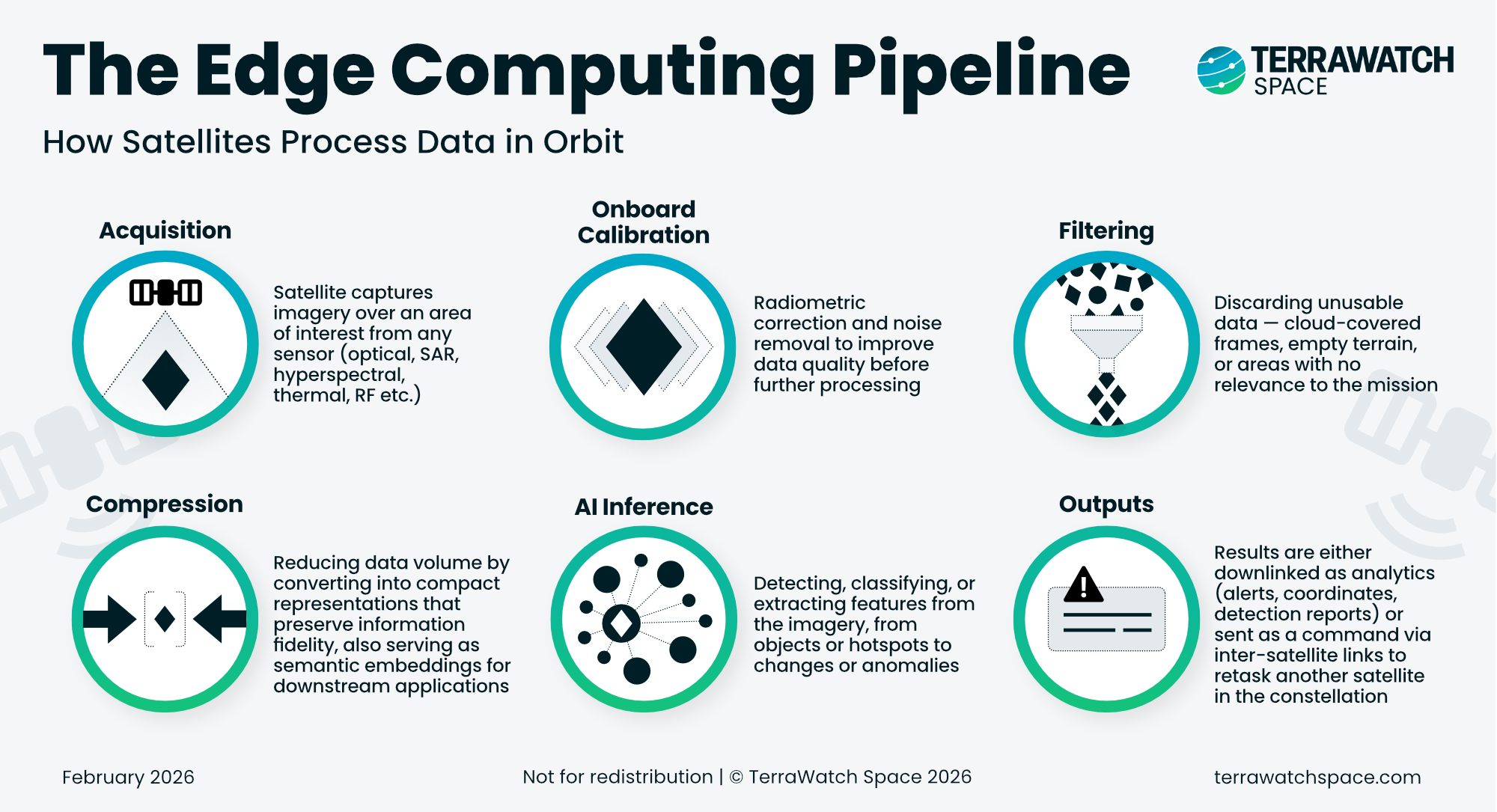

The Edge Computing Pipeline: What Actually Happens Onboard

The term "edge computing" gets borrowed liberally from telecoms and IoT, where it refers to processing data at the network periphery rather than in a centralised data centre. In EO, it means something more specific: processing satellite data onboard the spacecraft itself, before it ever reaches the ground.

But "onboard processing" can mean very different things depending on the mission. It helps to break the process down into its constituent steps before we discuss the categories of edge use cases and the variety of applications.

Acquisition

The satellite captures imagery over an area of interest with a remote sensing instrument: optical, SAR, hyperspectral, thermal, RF or something else. This step is no different from any conventional EO mission. What happens next is where edge computing begins.

Onboard Calibration

Radiometric correction, noise removal, basic quality adjustment. This step doesn't reduce data volume significantly, but it matters more than most people appreciate. If calibration is done poorly (or inconsistently) onboard, the resulting output may not integrate cleanly with ground-processed data downstream. This is a point we will return to in the adoption section, because it is one of the underappreciated barriers to scaling edge computing.
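To make the calibration step concrete, here is a minimal sketch of radiometric correction, assuming a simple linear sensor model. The function name and the gain, offset, and dark-level values are hypothetical placeholders, not taken from any real instrument.

```python
def calibrate(raw_dn, gain, offset, dark_level):
    """Convert raw digital numbers (DN) to radiance with a linear model.

    radiance = gain * (DN - dark_level) + offset, clamped at zero.
    All coefficients here are illustrative assumptions.
    """
    return [
        [max(0.0, gain * (dn - dark_level) + offset) for dn in row]
        for row in raw_dn
    ]


# A tiny 2x2 "frame" of raw sensor counts.
raw_frame = [[100, 120], [90, 300]]
radiance = calibrate(raw_frame, gain=0.5, offset=1.0, dark_level=100)
print(radiance)
```

The integration risk described above shows up here in miniature: if the onboard coefficients differ from the ones the ground segment uses, the same scene yields two different radiance values.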

Filtering

This is where the first significant data reduction happens. Cloud masking, discarding empty terrain, removing frames with no relevance to the tasked mission. A large portion of what the sensor captures is not useful and filtering removes it before any further processing takes place.
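As a rough illustration of the filtering step, the sketch below drops tiles whose estimated cloud cover is too high. It assumes a crude brightness threshold as a stand-in for a real cloud mask (operational systems typically use trained models); the tile names, thresholds, and function names are all hypothetical.

```python
def cloud_fraction(tile, brightness_threshold):
    """Fraction of pixels at or above the brightness threshold."""
    pixels = [p for row in tile for p in row]
    cloudy = sum(1 for p in pixels if p >= brightness_threshold)
    return cloudy / len(pixels)


def filter_tiles(tiles, brightness_threshold=200, max_cloud_fraction=0.5):
    """Keep only tiles whose estimated cloud cover is acceptable."""
    return {
        name: tile
        for name, tile in tiles.items()
        if cloud_fraction(tile, brightness_threshold) <= max_cloud_fraction
    }


# Three tiny 2x2 tiles with increasing amounts of bright (cloud-like) pixels.
tiles = {
    "clear": [[30, 40], [50, 60]],
    "partly_cloudy": [[30, 220], [50, 60]],
    "overcast": [[230, 240], [250, 60]],
}
kept = filter_tiles(tiles)
print(list(kept))
```

Only the tiles that survive this check move on to compression and inference, which is why filtering is the first point where data volume drops meaningfully.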

Compression

Reducing data volume before downlink. This can range from conventional compression to AI-driven approaches where a neural network learns to encode imagery into much smaller representations while preserving the information that matters. The most advanced versions can shrink data volume by orders of magnitude. More interestingly, the compressed representation itself can double as an embedding, a format that downstream AI models can use directly for analytics like classification or change detection without needing to decompress the image first.
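The conventional end of that range can be sketched with Python's standard `zlib` module. This is a toy under stated assumptions: the frame is synthetic and almost uniform, so it compresses far better than real imagery would, and the learned, lossy compression described above is not shown.

```python
import zlib


def compress_frame(pixels: bytes) -> bytes:
    """Losslessly compress a raw 8-bit frame before downlink."""
    return zlib.compress(pixels, level=9)


# Synthetic 64x64 8-bit frame: uniform background with a small bright patch.
width = height = 64
frame = bytearray([20] * (width * height))
for y in range(10, 14):
    for x in range(30, 34):
        frame[y * width + x] = 250

packed = compress_frame(bytes(frame))
ratio = len(frame) / len(packed)
restored = zlib.decompress(packed)

print(f"raw: {len(frame)} bytes, compressed: {len(packed)} bytes")
```

Lossless compression guarantees the ground segment recovers the exact pixels. AI-driven approaches give up that guarantee in exchange for much larger reductions.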

AI Inference

Detection, classification, feature extraction and so on: this is the step that actually generates value from satellite data, with vessels identified, hotspots flagged or changes detected. Essentially, this is where data transforms from imagery into information.
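The shift from imagery to information can be sketched as follows. The detector is a plain threshold standing in for a trained model, and the frame values, threshold, and field names are assumptions made for illustration.

```python
import json


def detect_hotspots(frame, threshold):
    """Return one record per pixel at or above the threshold.

    A stand-in for real inference: an onboard model would replace this rule.
    """
    return [
        {"row": r, "col": c, "value": v}
        for r, row in enumerate(frame)
        for c, v in enumerate(row)
        if v >= threshold
    ]


# Hypothetical thermal frame (brightness temperature in kelvin).
thermal_frame = [
    [300, 301, 299, 300],
    [300, 420, 302, 300],
    [299, 300, 300, 415],
]
detections = detect_hotspots(thermal_frame, threshold=400)
message = json.dumps(detections)
print(message)
```

What leaves this step is a short list of records rather than a grid of pixels, and that changes what has to be downlinked.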

Output

What comes out of the pipeline and where it goes depends on the mission. This is where the edge computing process branches into fundamentally different paths, each with different implications for how data reaches the ground and what it looks like when it gets there.

It is also where the strategic decisions begin. There are three fundamentally different ways to implement edge computing on a satellite. Each produces a different output, serves different customers, carries different trade-offs, and implies a different business model. Choosing between them, or combining them, is the architectural decision that will define the next generation of EO systems.

The rest of this piece breaks down those implementation pathways, the use cases they unlock, the commercial landscape of companies building across the stack, the competing architectural bets between edge processing and high-throughput optical downlinks, where adoption actually stands today, and where this is heading as foundation models and orbital data centres enter the picture.

The full piece continues with:

- Three Ways to Implement Edge Computing in EO

- Use Cases and Application Categories

- Edge Computing Commercial Landscape

- The Architectural Bets of EO Edge Computing

- The Adoption Challenge: What Has to Be True for Edge to Scale

- Outlook: Where Edge Computing Goes From Here